Private, Systematic LLM Evaluation

Self-hosted benchmarking studio for comparing language models with blind A/B/C testing, configurable judges, and statistical analysis. Your data never leaves your infrastructure.

Choosing the wrong LLM costs time and money

Manual testing is slow, evaluation is biased, and your data shouldn't leave your infrastructure.

Manual Testing is Slow

Copy-pasting prompts between different LLM platforms, manually comparing responses, and trying to remember which model performed better wastes hours of valuable development time.

Evaluation Bias

When you know which model generated which response, unconscious bias affects your judgment. Your team's preferences and expectations skew results, leading to poor model selection decisions.

Data Privacy Concerns

Sending proprietary prompts and evaluation data to third-party benchmarking services creates compliance risks. Legal teams need assurance that sensitive data stays on your infrastructure.

BeLLMark solves this in three ways

Self-Hosted Privacy

Run BeLLMark on your own infrastructure. Your prompts, API keys, and evaluation results never leave it. Supports compliance workflows for regulated industries, including healthcare and finance.

Blind Evaluation

Responses are shuffled as A/B/C before judging. Neither you nor your judges know which model generated which response until after scoring, eliminating unconscious bias.

Configurable Judges

Use any LLM as a judge with custom evaluation criteria. Compare GPT-4, Claude, Gemini, and local models side-by-side with AI-generated or custom scoring rubrics.

How It Works

Configure Models

Add API keys for OpenAI, Anthropic, Google, or local LM Studio endpoints.

Define Questions

Write custom prompts or use AI to generate domain-specific test cases.

Run Benchmark

Models generate responses in parallel, then judges evaluate them blindly.

Analyze Results

View charts, ELO rankings, statistical significance tests, and bias analysis. Export to HTML, JSON, CSV, PPTX, or PDF.

Everything you need for systematic LLM evaluation

Blind A/B/C Testing

Responses are shuffled before judging, and the label-to-model mapping is revealed only after scoring. This keeps model identity from influencing scores.

LLM-as-Judge

Use one or more language models as judges with customizable criteria. Choose separate scoring or direct comparison modes.

AI Criteria Generation

Let an LLM design evaluation rubrics for your specific use case, or write custom scoring criteria from scratch.

9 LLM Providers

OpenAI, Anthropic, Google, Grok, DeepSeek, GLM, Kimi, Mistral, and local LM Studio models. Add your own providers easily.

Statistical Analysis

Bootstrap CI, Wilcoxon significance tests, Cohen’s d effect sizes, ELO ratings, bias detection, and judge calibration — all built into the dashboard.

Rich Exports

Export to HTML reports, JSON, CSV, consulting-grade PPTX, or PDF — all formats include statistical summaries and confidence intervals.

Evaluation methodology you can defend

Enterprise-grade statistical rigor from blind evaluation to bias detection

Blind Evaluation

Responses are shuffled and assigned blind labels (A, B, C...). Judges evaluate without knowing which model produced which response. Mapping is revealed only after all scoring is complete.
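The blind-labeling step described above can be sketched in a few lines of Python. This is an illustrative sketch, not BeLLMark's actual code: the function name `blind_labels` and the data shapes are assumptions made for the example.

```python
import random
import string

def blind_labels(responses, rng=None):
    """Shuffle model responses and assign blind labels A, B, C, ...

    `responses` maps model name -> response text (hypothetical shape).
    Returns (blinded, mapping): `blinded` maps label -> text and is what a
    judge sees; `mapping` maps label -> model name and stays hidden until
    all scoring is complete. Supports up to 26 responses.
    """
    rng = rng or random.Random()
    models = list(responses)
    rng.shuffle(models)  # random order, so a label carries no model identity
    labels = string.ascii_uppercase[:len(models)]
    blinded = {label: responses[model] for label, model in zip(labels, models)}
    mapping = dict(zip(labels, models))
    return blinded, mapping

responses = {
    "model-x": "Answer one",
    "model-y": "Answer two",
    "model-z": "Answer three",
}
blinded, mapping = blind_labels(responses, random.Random(0))
print(sorted(blinded))  # the judge only ever sees these labels
```

Keeping `mapping` separate from `blinded` is the point of the design: the judge prompt is built from `blinded` alone, and the mapping is consulted only when results are revealed.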

Transparent Rubrics

AI generates evaluation criteria from your use case description, or you can write custom rubrics from scratch. You review and approve them before any benchmark runs. Your criteria, your standards.

Auditable Reasoning

Every judge score includes written reasoning. Expand any result to read exactly why a judge scored a response the way it did. In multi-judge mode, agreement between independent judges indicates how much confidence a score deserves.

Pipeline: Models → Blind Evaluation → Aggregation → Analysis → Report

Scoring System

Each response is scored 1–10 per criterion with defined anchors, then aggregated via weighted arithmetic mean.

Scores follow a three-tier scale: 1–3 (poor), 4–6 (acceptable), 7–10 (good to excellent). You define the criteria that matter for your use case — accuracy, completeness, tone, or any custom dimension. Each criterion carries a configurable weight.

Aggregation follows a three-stage pipeline: per-criterion scores are averaged across judges, then weighted by criterion importance, then averaged across questions to produce a final model score.
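As a rough illustration of the three-stage aggregation described above, here is a minimal Python sketch. The nested-dictionary layout, the criterion names, and the function name `model_score` are assumptions made for the example, not BeLLMark's internal data model.

```python
from statistics import mean

def model_score(scores, weights):
    """Aggregate judge scores into one model score.

    scores[question][criterion] is a list of 1-10 scores, one per judge
    (hypothetical layout). weights[criterion] is the criterion's weight.
    """
    per_question = []
    for criteria in scores.values():
        # Stage 1: average each criterion across judges.
        judge_avg = {c: mean(vals) for c, vals in criteria.items()}
        # Stage 2: weighted arithmetic mean across criteria.
        total_weight = sum(weights[c] for c in judge_avg)
        weighted = sum(judge_avg[c] * weights[c] for c in judge_avg)
        per_question.append(weighted / total_weight)
    # Stage 3: average across questions.
    return mean(per_question)

weights = {"accuracy": 2.0, "tone": 1.0}
scores = {
    "q1": {"accuracy": [8, 6], "tone": [7, 7]},
    "q2": {"accuracy": [9, 9], "tone": [4, 6]},
}
result = model_score(scores, weights)
print(round(result, 3))
```

In this example question 1 scores 7.0 and question 2 scores about 7.667, so the final model score is about 7.333.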

Confidence & Significance

Statistical tests tell you whether score differences are real or could be due to chance.

Wilson Score CI — confidence intervals on win rates that work correctly even with small sample sizes (unlike normal approximation).

Bootstrap CI — 1,000-resample confidence intervals on score differences between models, giving you a credible range for "how much better is Model A than Model B."

Wilcoxon Signed-Rank — non-parametric pairwise significance test. Doesn't assume scores are normally distributed. Reports p-values for every model pair.

Holm–Bonferroni Correction — adjusts p-values when comparing multiple models simultaneously, preventing false positives from repeated testing.

Statistical Power Analysis — estimates whether your sample size is large enough to detect meaningful differences, so you know if "not significant" means "no difference" or "not enough data."
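Two of the calculations listed above, the Wilson score interval and the Holm–Bonferroni adjustment, are short enough to sketch with the Python standard library. The function names are hypothetical and the code shows the textbook formulas, not BeLLMark's implementation.

```python
import math

def wilson_interval(wins, n, z=1.96):
    """Wilson score interval for a win rate (z=1.96 gives roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = wins / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half

def holm_adjust(p_values):
    """Holm-Bonferroni adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p-value is multiplied by m, the next by m - 1, ...
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity so adjusted values never decrease.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted

low, high = wilson_interval(wins=7, n=10)
adjusted = holm_adjust([0.01, 0.04, 0.03])
print(round(low, 3), round(high, 3), [round(p, 3) for p in adjusted])
```

With only 10 comparisons, a 70% win rate has a wide interval (roughly 0.40 to 0.89), which is why small samples rarely settle a ranking on their own.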

Effect Sizes & Comparisons

Beyond "is it significant?" — how large is the difference, and how do all models rank together?

Cohen's d — standardized effect size for every model pair. Labeled as small (0.2), medium (0.5), or large (0.8+) so you can judge practical significance, not just statistical significance.

Pairwise Comparison Matrix — every model compared against every other model with significance indicators, effect sizes, and confidence intervals in a single table.

Friedman Test — non-parametric test for overall ranking significance across all models simultaneously.
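To make the effect-size labels above concrete, here is a minimal sketch of Cohen's d using the pooled standard deviation. Which variant BeLLMark computes is not stated in the text, so the pooled form is an assumption, and the "negligible" label for values under 0.2 is added for the example only.

```python
import math
from statistics import mean, variance

def cohens_d(a, b):
    """Cohen's d with pooled sample standard deviation (assumed variant)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    if pooled_var == 0:
        raise ValueError("effect size is undefined when both samples have zero variance")
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)

def effect_label(d):
    """Map |d| to the conventional small / medium / large thresholds."""
    size = abs(d)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "negligible"  # label below 0.2 is an assumption for this sketch

model_a = [8, 7, 9, 8, 7]
model_b = [6, 7, 6, 5, 6]
d = cohens_d(model_a, model_b)
print(round(d, 3), effect_label(d))
```

Here the mean gap of 1.8 points is large relative to the spread of scores, so d is about 2.32 and is labeled large.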

Bias Detection

Automatically detects four types of evaluation bias that could undermine your results.

Position Bias — does the order in which responses are presented affect scores? Detected by comparing scores across presentation positions (A vs B vs C).

Length Bias — do longer responses get higher scores regardless of quality? Measured via Spearman rank correlation (ρ) between response length and score.

Self-Preference Bias — does a judge model favor responses from itself or its own provider? Flagged when detected.

Verbosity Bias — related to length bias but focused on token count relative to content density. LC (length-controlled) win rates correct for this by adjusting scores for response length.
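The length-bias check above relies on Spearman's rank correlation. A standard-library sketch follows, with ties given their average rank; the sample lengths and scores are invented for illustration, and the function names are hypothetical.

```python
import math
from statistics import mean

def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average rank of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation; undefined (division by zero) if either input is constant."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def spearman_rho(x, y):
    """Spearman's rho is the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

lengths = [120, 340, 200, 510, 280]  # made-up response lengths
scores = [6, 8, 7, 9, 7]             # made-up judge scores
rho = spearman_rho(lengths, scores)
print(round(rho, 3))
```

A value near +1, as in this invented data, would suggest that longer responses are being scored higher and that the result deserves a closer look.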

Judge Calibration

Measures how consistent and reliable your judges are, both individually and as a group.

Cohen's Kappa (2 judges) / Fleiss' Kappa (3+ judges) — inter-rater agreement beyond what would be expected by chance. Values above 0.6 indicate substantial agreement; below 0.4 suggests judges are evaluating differently.

ICC (Intraclass Correlation) — measures the consistency of absolute score values across judges, not just ranking agreement.

Per-Judge Reliability — individual reliability scores identify if one judge is consistently an outlier, so you can investigate or remove unreliable judges.
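For the two-judge case above, Cohen's kappa can be sketched as follows. Kappa works on categories, so this example first buckets 1-10 scores into the three tiers from the scoring scale; that bucketing step is an assumption for illustration, since the text does not say how BeLLMark prepares scores for kappa.

```python
from collections import Counter

def tier(score):
    """Bucket a 1-10 score into the three tiers (assumed preprocessing)."""
    if score <= 3:
        return "poor"
    if score <= 6:
        return "acceptable"
    return "good"

def cohens_kappa(ratings_a, ratings_b):
    """Agreement between two raters, corrected for chance agreement."""
    n = len(ratings_a)
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    count_a, count_b = Counter(ratings_a), Counter(ratings_b)
    categories = count_a.keys() | count_b.keys()
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when both raters use a single category")
    return (observed - expected) / (1 - expected)

judge_a = [8, 7, 3, 5, 9, 2, 6, 7]  # made-up scores
judge_b = [9, 6, 2, 5, 8, 4, 7, 7]
kappa = cohens_kappa([tier(s) for s in judge_a], [tier(s) for s in judge_b])
print(round(kappa, 3))
```

The two judges agree on 5 of 8 tiers, but 37.5% agreement would be expected by chance, so kappa comes out at 0.4, right on the lower threshold quoted above.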

ELO Rating System

Track model performance across multiple benchmark runs with an adaptive rating system.

Bayesian Adaptive K-Factor — new or uncertain models have higher K-factors (ratings change more per run), while established models have lower K-factors (ratings are more stable). This means a single anomalous result won't dramatically change a well-established model's ranking.

Cross-Run Tracking — ratings update automatically when any benchmark run completes. The global leaderboard reflects cumulative performance across all your evaluations.

Rating History — per-model rating history charts show how a model's ranking has evolved over time as more benchmarks are run.
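The effect of an adaptive K-factor can be illustrated with a standard Elo update. The expected-score formula below is the usual Elo one, but the K schedule is a simple stand-in: the text does not give BeLLMark's Bayesian formula, so `k_factor`, its parameters, and the starting rating of 1500 are all assumptions.

```python
def expected_score(rating_a, rating_b):
    """Standard Elo expected score of A against B."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))

def k_factor(games_played, k_max=40.0, k_min=10.0, half_life=10.0):
    """Hypothetical schedule: K decays from k_max toward k_min with experience.

    This is NOT the Bayesian rule mentioned above, only a stand-in with the
    same qualitative behavior (new models move faster than established ones).
    """
    return k_min + (k_max - k_min) * 0.5 ** (games_played / half_life)

def update(rating_a, rating_b, score_a, games_a, games_b):
    """Return new ratings after one comparison; score_a is 1, 0.5, or 0."""
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k_factor(games_a) * (score_a - exp_a)
    new_b = rating_b + k_factor(games_b) * ((1 - score_a) - (1 - exp_a))
    return new_a, new_b

# A brand-new model (0 games) beats an established one (50 games).
new_model, established = update(1500, 1500, score_a=1, games_a=0, games_b=50)
print(new_model, established)
```

In this sketch the new model gains 20 points while the established model loses only about 5.5, which matches the behavior described above: one surprising result moves an uncertain rating much more than a well-established one.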

See BeLLMark in action

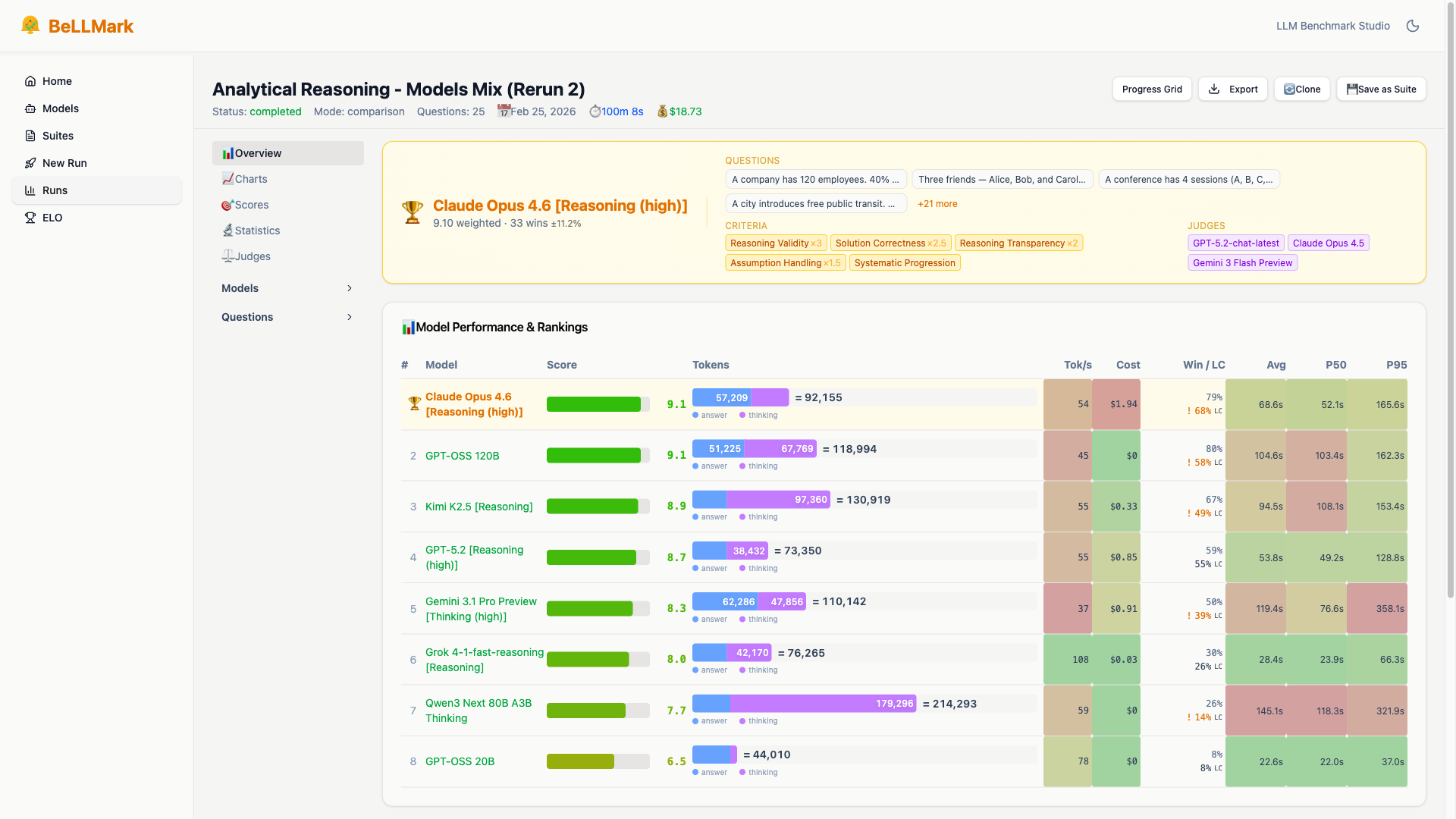

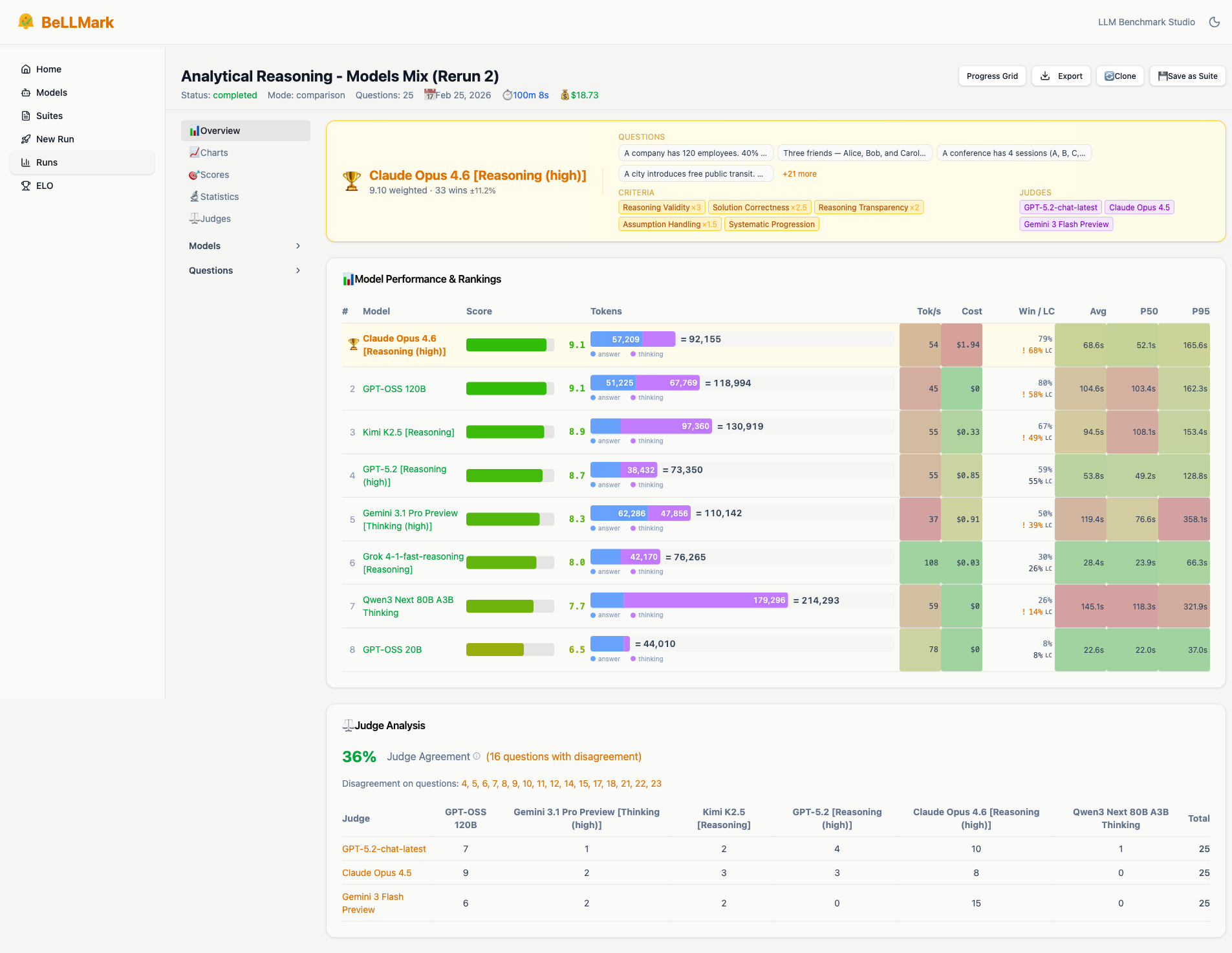

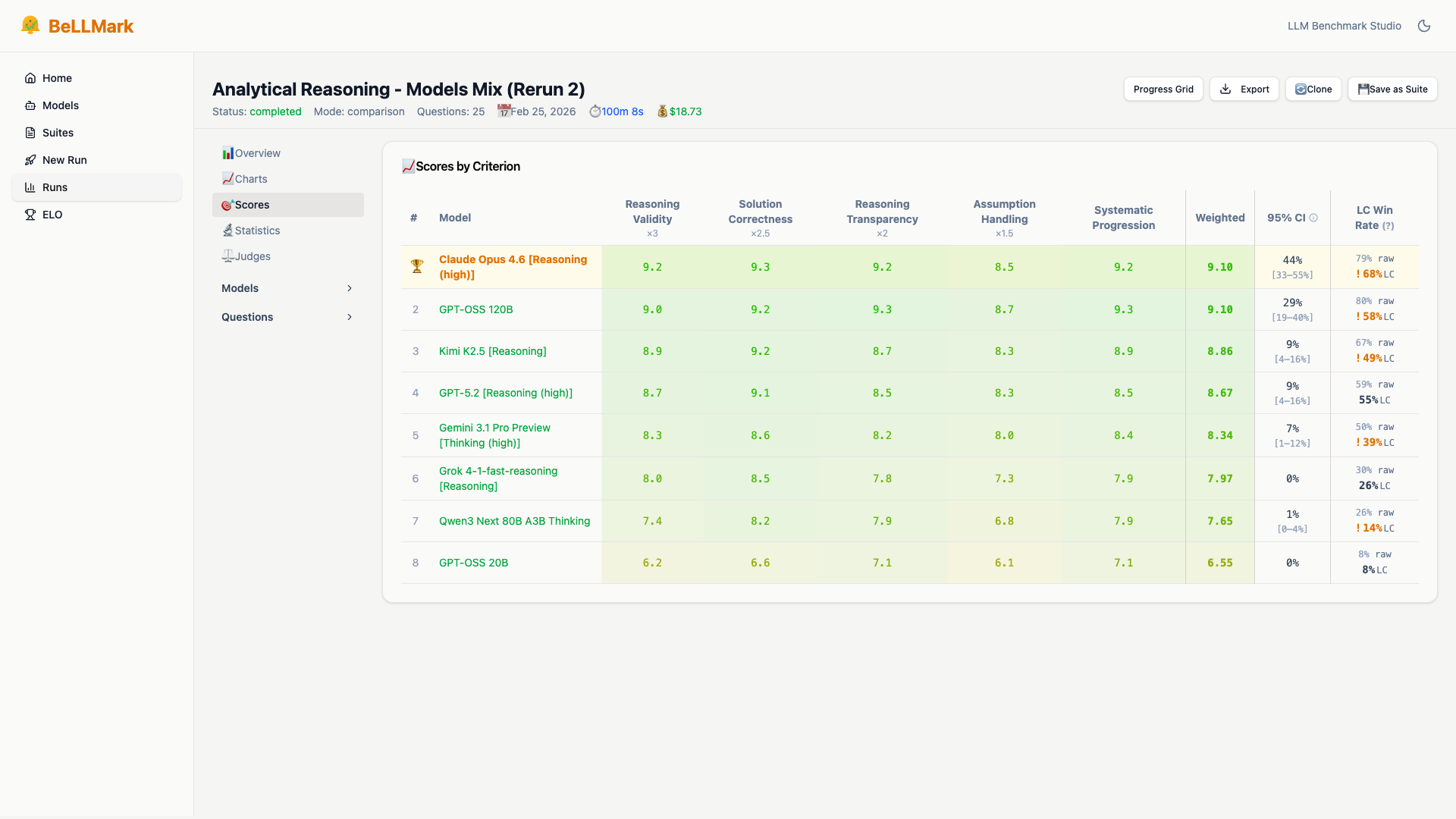

Real results from a completed benchmark — 8 models, 25 analytical reasoning questions, 3 independent judges. Explore the full analysis below.

Detailed results & per-criterion scoring

Full benchmark overview with model rankings, token usage, and cost breakdowns — plus granular scores by criterion with confidence intervals and win rates.

Ships with 5 research-backed benchmark suites

Ready-to-use evaluation sets covering reasoning, writing, compliance, calibration, and domain expertise. Import them with one click or download the JSON.

Built for teams who need to make informed AI decisions

Compliance & Legal

Evaluate LLM accuracy on legal reasoning, contract analysis, and regulatory interpretation without sending client data to external benchmarking services.

- Test contract summarization accuracy

- Compare legal reasoning capabilities

- Validate compliance advisory quality

- Keep sensitive data on-premises

AI Consultants

Provide clients with objective, data-driven model recommendations backed by systematic benchmarking on their specific use cases.

- Generate client-specific test cases

- Deliver professional HTML reports

- Compare cost vs. performance tradeoffs

- Justify model selection decisions

Engineering Teams

Make informed decisions about which LLM to use in production by testing on real prompts before committing to API contracts.

- Test local vs. cloud model quality

- Validate prompt engineering changes

- Compare reasoning model performance

- A/B test prompt templates

Why teams choose BeLLMark

Self-Hosted

Run on your infrastructure, where your data stays. Self-hosting and zero telemetry support your compliance workflows.

No Subscription

One-time purchase, lifetime license. No recurring fees, no per-seat charges, no usage limits.

Local Models

Test local LM Studio models alongside cloud providers. Compare cost vs. quality tradeoffs systematically.

Accessible

Clean web interface, no technical configuration needed. Non-technical stakeholders can run benchmarks independently.

Simple, transparent pricing

- All current and future features

- Self-hosted on your infrastructure

- 9 LLM provider integrations

- Blind A/B/C evaluation

- AI criteria generation

- Real-time progress tracking

- Statistical analysis & ELO ratings

- HTML/JSON/CSV/PPTX/PDF exports

- Free updates for life

- Best-effort email support

BeLLMark: €799 once — break even in 3 months

30-day money-back guarantee — if BeLLMark doesn't work for your use case, email us for a full refund.

Run your first blind evaluation in 30 minutes. Free for personal, educational, and non-commercial use — upgrade when you commercialize. Same features during evaluation, no time limits.

Frequently Asked Questions

What does "per legal entity" mean?

One license covers unlimited users within a single legal entity (corporation, LLC, nonprofit, etc.). If you have multiple subsidiaries or separate legal entities, each needs its own license. Freelancers and sole proprietors need one license for their business use.

Is BeLLMark open source?

BeLLMark is source-available under the PolyForm Noncommercial 1.0.0 license. You can view, modify, and use the code for free for personal, educational, and non-commercial purposes. Commercial use requires a paid license (€799 one-time per legal entity, €499 introductory during the first 60 days). See our licensing terms for what the commercial license covers.

Do I need technical skills to use BeLLMark?

No programming knowledge required! BeLLMark has a clean web interface. If you can use a web browser and have API keys for LLM providers (like OpenAI or Anthropic), you can run benchmarks. Installation requires basic command-line familiarity (Docker or Python/Node.js).

How do I install BeLLMark?

Three installation options: (1) Docker Compose (recommended, one command), (2) Manual setup with Python backend + Node.js frontend, or (3) Production build served from a single backend process. Full instructions in the GitHub repository. Typical setup time: 5-10 minutes.

What LLM providers are supported?

BeLLMark supports OpenAI (GPT-4, GPT-5, o1), Anthropic (Claude Opus/Sonnet/Haiku), Google (Gemini 2.5/3), Grok, DeepSeek, GLM, Kimi, Mistral, and local LM Studio models. The architecture is modular: you can add a new provider by implementing the OpenAI-compatible endpoint pattern.

How do updates work?

All updates are free for life — including future major versions. Pull the latest code from GitHub whenever a new version is released. No subscription fees, no forced upgrade cycles, no license keys to manage. Your commercial license covers all future features and improvements.

What about my API keys and data privacy?

Everything runs on your infrastructure. API keys are encrypted at rest in your local SQLite database. Prompts, responses, and evaluation results stay on your server. BeLLMark sends zero telemetry and makes no outbound calls except to the LLM providers you configure. This architecture supports your compliance goals for frameworks like GDPR and HIPAA — actual certifications depend on your infrastructure setup and LLM provider agreements. Contact us for framework-specific guidance.

How does LLM-as-judge work and how do you validate it?

BeLLMark sends each model's response to a judge LLM along with your evaluation criteria. The judge scores each response on a 1-10 scale per criterion, providing written reasoning for each score. Responses are presented with blind labels (A, B, C) so the judge doesn't know which model produced which response. For validation, use multi-judge mode (multiple LLMs evaluate independently), then check inter-judge agreement and the judge calibration statistics to see how reliable and reproducible the results are.

Can we use human raters alongside LLM judges?

Not yet as a built-in feature, but BeLLMark's results are fully exportable (HTML, JSON, CSV, PPTX, PDF) for human review. The recommended workflow: run LLM-as-judge for initial screening, then export the top candidates' responses for human evaluation. Native human evaluation workflows with integrated scoring are on our roadmap.

How do you handle rate limits, failures, and retries?

BeLLMark automatically retries failed API calls up to 3 times with progressive backoff (2s, 5s, 10s delays). It checkpoints before phase transitions (generation → judging) so partial progress is preserved. If an API call fails after all retries, the specific failure is logged and a manual retry button appears in the progress view. Other models and questions continue processing normally.
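The retry behavior described above can be sketched as a small helper. The function name `call_with_retries` and the injectable `sleep` parameter are assumptions made so the example can run without real waiting; only the retry count and the 2s, 5s, 10s delays come from the description.

```python
import time

RETRY_DELAYS = (2, 5, 10)  # seconds before retry 1, 2, and 3

def call_with_retries(fn, delays=RETRY_DELAYS, sleep=time.sleep):
    """Call fn(); on failure, wait and retry once per entry in `delays`.

    Re-raises the last error once all retries are exhausted. A real client
    would catch only provider-specific, retryable errors; catching Exception
    keeps this sketch short.
    """
    last_error = None
    for attempt in range(len(delays) + 1):  # 1 initial try + len(delays) retries
        try:
            return fn()
        except Exception as exc:
            last_error = exc
            if attempt < len(delays):
                sleep(delays[attempt])
    raise last_error

# Demo: a fake API call that fails twice, then succeeds.
calls = {"n": 0}

def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("rate limited")
    return "ok"

waited = []  # record delays instead of actually sleeping
result = call_with_retries(flaky, sleep=waited.append)
print(result, waited)
```

Passing `sleep` as a parameter keeps the delay schedule testable: the demo records the 2s and 5s waits it would have made and returns on the third attempt.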

Do you support role-based access or multiple workspaces?

BeLLMark currently runs as a single-user application. For team use, we recommend deploying behind your existing authentication (VPN, reverse proxy with SSO, or network-level access control). Multi-user support with role-based access and team workspaces is on our roadmap. All benchmark data is stored in a single SQLite database that can be shared across the team.

How does BeLLMark compare to other evaluation tools?

vs. CLI tools (e.g., Promptfoo): BeLLMark provides a visual web interface with blind A/B/C evaluation, real-time progress, and consulting-grade export formats (PPTX, PDF). No YAML configuration required.

vs. Public leaderboards (e.g., Chatbot Arena): BeLLMark runs on your infrastructure with your own questions and criteria. Your proprietary prompts never leave your servers.

vs. LLMOps platforms: BeLLMark is a focused evaluation studio, not a production monitoring tool. One-time purchase, no subscription, no usage limits.